virtual switch (vSwitch)

What is a virtual switch (vSwitch)?

A virtual switch (vSwitch) is a software program that enables one virtual machine (VM) to communicate with another. Virtual switches are also used to establish connections between virtual and physical networks and to carry a VM's traffic to other VMs or a physical network.

The relationship between virtual switches, VMs and physical network adapters is what enables VMs to access and operate on Ethernet networks. Virtual switches provide nearly the same functions as regular switches, with the exception of some advanced functionality found in physical switches. One difference is that physical switches can be cabled together into network loops, whereas virtual switches typically cannot.

Just like its counterpart, the physical Ethernet switch, a virtual switch does more than just forward data packets. It can intelligently direct communication on a network by inspecting packets before passing them on. Some vendors embed virtual switches into their virtualization software, but a virtual switch can also be included in a server's hardware as part of its firmware.

How does a virtual switch work?

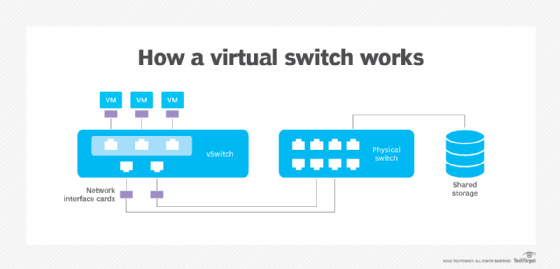

Virtual switches connect to VMs in much the same way that physical switches connect to physical machines. VMs use virtual network adapters and virtual switches to reach physical networks, and the virtual switch in turn connects to a physical network interface card (NIC) to reach the physical network.

A virtual switch detects which VMs are logically connected to each of its virtual ports and forwards network traffic to the correct VM. The virtual switch directs data on a network by checking data packets before moving them to their destination.
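The forwarding behavior described above can be sketched in a few lines of Python. This is a simplified model, not any vendor's implementation: the class `VirtualSwitch`, the `attach` and `forward` methods, the port names and the MAC addresses are all hypothetical, and the sketch assumes the switch is told each VM's MAC address when the VM's virtual adapter is attached.

```python
from dataclasses import dataclass

BROADCAST = "ff:ff:ff:ff:ff:ff"


@dataclass
class Frame:
    """A minimal Ethernet-like frame: only the fields the switch inspects."""
    src: str
    dst: str
    payload: str = ""


class VirtualSwitch:
    """Toy model of a virtual switch (hypothetical names, illustration only)."""

    def __init__(self, uplink=None):
        # Assumption: at most one uplink port, bound to a physical NIC.
        self.uplink = uplink
        # Maps a VM's MAC address to the virtual port it is attached to.
        self.port_by_mac = {}

    def attach(self, port, mac):
        """Record that a VM adapter with this MAC is connected to a virtual port."""
        self.port_by_mac[mac.lower()] = port

    def forward(self, in_port, frame):
        """Inspect the frame and return the list of ports it should leave on."""
        dst = frame.dst.lower()
        if dst == BROADCAST:
            # Broadcasts go to every other port, including the uplink if present.
            targets = set(self.port_by_mac.values())
            if self.uplink is not None:
                targets.add(self.uplink)
            targets.discard(in_port)
            return sorted(targets)
        out_port = self.port_by_mac.get(dst)
        if out_port is not None:
            # Destination is a local VM: deliver only to its virtual port.
            return [] if out_port == in_port else [out_port]
        # Unknown destination: hand off to the physical network, if there is one
        # and the frame did not arrive from it.
        if self.uplink is not None and in_port != self.uplink:
            return [self.uplink]
        return []


switch = VirtualSwitch(uplink="uplink0")
switch.attach("vport1", "00:00:00:00:00:01")
switch.attach("vport2", "00:00:00:00:00:02")
local = switch.forward("vport1", Frame("00:00:00:00:00:01", "00:00:00:00:00:02"))
print(local)
```

In this sketch, traffic between two VMs on the same switch never touches the uplink, while traffic for an address the switch does not know is passed to the physical NIC.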

Virtual switches reduce the complexity of network configurations by decreasing the number of physical switches that would otherwise need to be managed. With additional network and security settings, these switches also help protect the integrity of VMs.

Uses for virtual switches

Virtual switches are typically used to connect VMs to one another or to connect virtual and physical networks. They can also be used to ensure the integrity of a VM's profile, including its network and security settings, as the VM is migrated across physical hosts on the network.

The following virtual switches have different stated use cases:

- Open vSwitch, a Linux Foundation collaborative project licensed under the Apache License 2.0, is designed to support large production environments with multiple physical servers for large-scale network automation. It is also designed to support standard management interfaces and protocols.

- Hyper-V Virtual Switch is a virtual switch in Microsoft Hyper-V that connects VMs to virtual networks and to physical networks that are external to the Hyper-V host. It also provides policy enforcement for security, isolation and service levels, and it can be used for traffic shaping and for protecting against malicious VMs. Independent software vendors can also extend the switch by creating virtual switch extension plugins.

- VMware vSwitches are used mainly to ensure a connection between VMs. They can also connect virtual and physical networks. In addition, these switches can be used to connect VMware ESXi hosts to storage, to carry fault tolerance logging traffic and to support live migrations of VMs between ESXi hosts.

Advantages of using a virtual switch

Virtual switches offer the following advantages:

- Manageability. Network administrators can manage deployed virtual switches through a hypervisor.

- Functionality. It is easier to add new functionality to a virtual switch than to a physical switch.

- Enhanced security. Virtual switches provide policy enforcement for security.

- Ability to isolate. Private virtual switches can completely isolate VMs from outside networks, which is useful for carrying out tests in an isolated environment.

Types of virtual switches

There are several types of virtual switches, including the following:

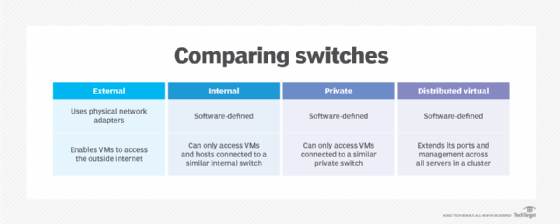

- External virtual switches. This switch type is bound to a physical network card and provides connected VMs with physical external network access. VMs connected to a common external virtual switch can communicate with one another. The host operating system can also communicate across external virtual switches.

- Internal virtual switches. Unlike an external virtual switch, an internal virtual switch is not linked to a physical network adapter. Instead, it ties networks together entirely in software. The host connected to an internal virtual switch can communicate with the VMs connected to that switch, and those VMs can communicate with one another. But VMs connected to an internal virtual switch are unable to connect to the internet or access network resources not connected to the internal virtual switch. This is useful for creating isolated environments in which VMs can still communicate with the hypervisor host.

- Private virtual switches. A private virtual switch completely isolates its VMs from everything outside the switch. VMs connected to a private virtual switch network can communicate with one another, but they cannot communicate with any resources outside the private virtual switch.

- Distributed virtual switches. While virtual switches can handle many VMs on a host, standard virtual switches do not extend beyond a single host. Distributed virtual switches help to meet the switching demands of clustered virtualized hosts by enabling the cluster nodes to share the same switch.
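The difference between the external, internal and private types comes down to what a connected VM can reach. The following Python sketch encodes that rule as described in the list above. The names `SwitchType`, `reachable_from_vm` and the endpoint labels are hypothetical and chosen only for illustration; distributed switches are left out because they differ in scope (multiple hosts) rather than in reachability.

```python
from enum import Enum


class SwitchType(Enum):
    EXTERNAL = "external"
    INTERNAL = "internal"
    PRIVATE = "private"


def reachable_from_vm(switch_type):
    """Return the set of endpoints a VM on this type of switch can talk to.

    The endpoint labels are illustrative, not a real API:
      "vms_on_switch"    - other VMs attached to the same virtual switch
      "host"             - the hypervisor host operating system
      "physical_network" - anything beyond the host's physical adapter
    """
    # Every switch type lets its own VMs communicate with one another.
    reach = {"vms_on_switch"}
    # External and internal switches also include the host.
    if switch_type in (SwitchType.EXTERNAL, SwitchType.INTERNAL):
        reach.add("host")
    # Only an external switch is bound to a physical network adapter.
    if switch_type is SwitchType.EXTERNAL:
        reach.add("physical_network")
    return reach


for kind in SwitchType:
    print(kind.value, sorted(reachable_from_vm(kind)))
```

Read this way, each type is a strict subset of the previous one: private is the most isolated, internal adds the host, and external adds the physical network.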
