Ethernet

What is Ethernet?

Ethernet is the traditional technology for connecting devices in a wired local area network (LAN) or wide area network. It enables devices to communicate with each other via a protocol, which is a set of rules or common network language.

Ethernet describes how network devices format and transmit data so other devices on the same LAN or campus network can recognize, receive and process the information. An Ethernet cable is the physical, encased wiring over which the data travels.

Connected devices that use cables to access a geographically localized network -- instead of a wireless connection -- likely use Ethernet. From businesses to gamers, diverse end users rely on the benefits of Ethernet connectivity, which include reliability and security.

Compared to wireless LAN (WLAN) technology, Ethernet is typically less vulnerable to disruptions. It can also offer a greater degree of network security and control than wireless technology because devices must connect using physical cabling. This makes it difficult for outsiders to access network data or hijack bandwidth for unsanctioned devices.

Why is Ethernet used?

Ethernet is used to connect devices in a network and is still a popular form of network connection. Organizations with local networks, such as company offices, school campuses and hospitals, use Ethernet for its high speed, security and reliability.

Ethernet initially grew popular due to its inexpensive price tag when compared to the competing technology of the time, such as IBM's token ring. As network technology advanced, Ethernet's ability to evolve and deliver higher levels of performance ensured its sustained popularity. Throughout its evolution, Ethernet also maintained backward compatibility.

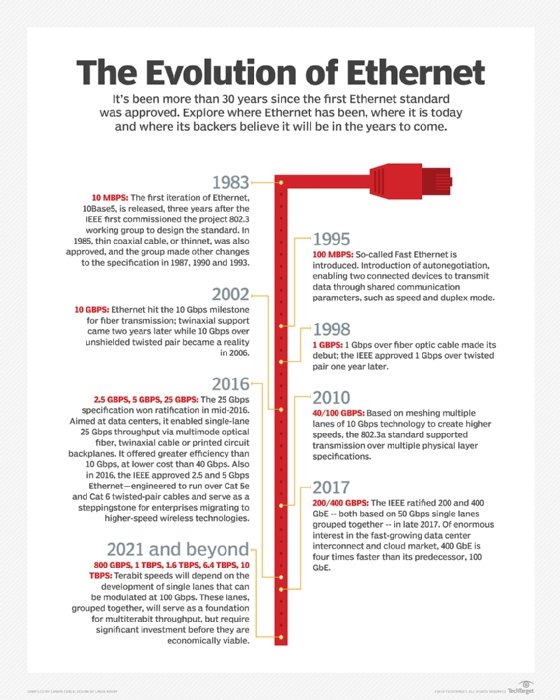

Ethernet's original throughput of 10 megabits per second (Mbps) increased tenfold to 100 Mbps in the mid-1990s. IEEE continues to deliver increased performance with successive updates. Current versions of Ethernet support operations of up to 400 gigabits per second (Gbps).

Advantages of Ethernet

Ethernet has many benefits for users, which is why it grew so popular. Here are some of the common benefits of Ethernet:

- Relatively low cost.

- Backward compatibility.

- Generally resistant to noise.

- Good data transfer quality.

- Speed.

- Reliability.

- Data security, as common firewalls can be used.

Disadvantages of Ethernet

Despite its widespread use, Ethernet does have its share of disadvantages, such as the following:

- Intended for smaller, shorter-distance networks.

- Limited mobility.

- Use of longer cables can create cross-talk.

- Doesn't work well with real-time or interactive applications.

- Speeds decrease with increased traffic.

- Receivers don't acknowledge the reception of data packets.

- Troubleshooting is hard when trying to trace which specific cable or node is causing the issue.

Ethernet vs. Wi-Fi

Wi-Fi is the most popular type of network connection. Unlike wired connection types, such as Ethernet, it does not require a physical cable to be connected. Instead, data is transmitted through wireless signals.

Below are some of the major differences between Ethernet and Wi-Fi connections.

Ethernet connections

- Transmit data over a cable.

- Limited mobility, as a physical cable is required.

- More speed, reliability and security than Wi-Fi.

- Consistent speed.

- Data encryption is not required.

- Lower latency.

- More complex installation process.

Wi-Fi connections

- Transmit data through wireless signals rather than over a cable.

- Better mobility, as no cables are required.

- Not as fast, reliable or secure as Ethernet.

- More convenient because users can connect to the internet from anywhere within range of the wireless network.

- Inconsistent speed, as Wi-Fi is prone to signal interference.

- Require data encryption.

- Higher latency than Ethernet.

- Simpler installation process.

How Ethernet works

The IEEE 802.3 family of standards specifies that Ethernet operates at both Layer 1 (the physical layer) and Layer 2 (the data link layer) of the Open Systems Interconnection (OSI) model.

Ethernet defines two units of transmission: packet and frame. The frame includes the payload of data being transmitted, as well as the following:

- The physical, or media access control (MAC), addresses of both the sender and receiver.

- Virtual LAN (VLAN) tagging and quality of service information.

- Error-detection information, called the frame check sequence (FCS), which lets the receiver detect transmission problems.
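To make the frame fields concrete, the following is a minimal sketch that assembles an Ethernet II frame from a destination MAC address, a source MAC address, an optional 802.1Q VLAN tag carrying a priority (QoS) value, an EtherType and a payload, then appends the frame check sequence. It assumes Ethernet II framing, uses Python's `zlib.crc32` (the same CRC-32 polynomial that Ethernet uses for the FCS) and pads short frames to the 64-byte minimum. The function names `mac_to_bytes`, `build_frame` and `fcs_ok` are hypothetical, chosen for illustration. Note that the FCS only detects corruption; it cannot correct it.

```python
import struct
import zlib
from typing import Optional

MIN_FRAME_WITHOUT_FCS = 60  # 64-byte minimum frame size minus the 4-byte FCS


def mac_to_bytes(mac: str) -> bytes:
    """Convert a MAC address such as '00:1a:2b:3c:4d:5e' into 6 raw bytes."""
    parts = mac.split(":")
    if len(parts) != 6:
        raise ValueError(f"invalid MAC address: {mac!r}")
    return bytes(int(part, 16) for part in parts)


def build_frame(dst: str, src: str, ethertype: int, payload: bytes,
                vlan_id: Optional[int] = None, priority: int = 0) -> bytes:
    """Assemble a frame: MAC addresses, optional 802.1Q tag, EtherType, payload, FCS."""
    frame = mac_to_bytes(dst) + mac_to_bytes(src)
    if vlan_id is not None:
        # 802.1Q tag: TPID 0x8100, then 3-bit priority, 1-bit DEI (left at 0), 12-bit VLAN ID.
        tci = ((priority & 0x7) << 13) | (vlan_id & 0x0FFF)
        frame += struct.pack("!HH", 0x8100, tci)
    frame += struct.pack("!H", ethertype) + payload
    # Pad short frames with zero bytes so the finished frame is at least 64 bytes long.
    if len(frame) < MIN_FRAME_WITHOUT_FCS:
        frame += bytes(MIN_FRAME_WITHOUT_FCS - len(frame))
    # Frame check sequence: CRC-32 over everything above, least significant byte first.
    return frame + struct.pack("<I", zlib.crc32(frame))


def fcs_ok(frame: bytes) -> bool:
    """Receiver-side check: recompute the CRC-32 and compare it with the stored FCS."""
    return struct.pack("<I", zlib.crc32(frame[:-4])) == frame[-4:]


# Example: a broadcast IPv4 (EtherType 0x0800) frame with a tiny payload.
frame = build_frame("ff:ff:ff:ff:ff:ff", "00:1a:2b:3c:4d:5e", 0x0800, b"hello")
print(len(frame), fcs_ok(frame))  # 64 True
damaged = frame[:20] + bytes([frame[20] ^ 0x01]) + frame[21:]  # flip one bit
print(fcs_ok(damaged))  # False: the receiver detects the error but cannot repair it
```

Real network cards compute the FCS in hardware; the sketch only shows which fields a frame carries and how the check works.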

Each frame is wrapped in a packet that begins with 8 bytes of extra information: a 7-byte preamble that lets the receiver synchronize with the incoming signal and a 1-byte start frame delimiter (SFD) that marks where the frame starts.
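The wrapping step can be sketched in a few lines. In IEEE 802.3 the leading bytes are a 7-byte preamble of alternating 1s and 0s (0x55 per byte) followed by a 1-byte start frame delimiter (0xD5). The sketch treats the frame as opaque bytes; the helper names `add_preamble` and `strip_preamble` are hypothetical, and real hardware does this at the bit level rather than on Python byte strings.

```python
PREAMBLE = bytes([0x55] * 7)  # 7 bytes of alternating 1s and 0s for clock synchronization
SFD = bytes([0xD5])           # start frame delimiter: the frame starts with the next byte


def add_preamble(frame: bytes) -> bytes:
    """Prefix a frame with the preamble and SFD, the way it is sent on the wire."""
    return PREAMBLE + SFD + frame


def strip_preamble(stream: bytes) -> bytes:
    """Skip any preamble bytes, check for the SFD and return the frame that follows it."""
    i = 0
    while i < len(stream) and stream[i] == 0x55:
        i += 1  # a receiver may miss the first few preamble bits, so the count can vary
    if i >= len(stream) or stream[i] != 0xD5:
        raise ValueError("start frame delimiter not found")
    return stream[i + 1:]


wire = add_preamble(b"\x01\x02\x03")
print(len(wire))             # 11: 7 preamble bytes + 1 SFD byte + 3 frame bytes
print(strip_preamble(wire))  # b'\x01\x02\x03'
```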

Engineers at Xerox first developed Ethernet in the 1970s. Ethernet initially ran over coaxial cables. Early Ethernet connected multiple devices into network segments through hubs -- Layer 1 devices responsible for transporting network data -- using either a daisy chain or star topology. Currently, a typical Ethernet LAN uses special grades of twisted-pair cables or fiber optic cabling.

If two devices that share a hub try to transmit data at the same time, the packets can collide and create connectivity problems. To alleviate these digital traffic jams, IEEE developed the Carrier Sense Multiple Access with Collision Detection (CSMA/CD) protocol. This protocol enables devices to check whether a given line is in use before initiating new transmissions and, if a collision still occurs, to detect it, stop transmitting and retry after a random delay.
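The retry logic of CSMA/CD can be sketched as a small simulation. A station defers while the line is busy (carrier sense); if its transmission collides, it waits a random number of slot times chosen by truncated binary exponential backoff: after the nth collision it picks k from 0 to 2^min(n, 10) - 1, and it discards the frame after 16 attempts. The slot time of 512 bit times applies to 10 Mbps and 100 Mbps Ethernet. The function names and the callback-based harness (`line_busy`, `collided`) are hypothetical, and the jam signal and real timing are not modeled. CSMA/CD only matters on shared, half-duplex segments; full-duplex switched links do not use it.

```python
import random
from typing import Callable, List, Tuple

SLOT_TIME_BITS = 512  # one slot time on 10 Mbps and 100 Mbps Ethernet, in bit times
MAX_ATTEMPTS = 16     # the frame is discarded after 16 failed transmission attempts
BACKOFF_LIMIT = 10    # the backoff range stops doubling after the 10th collision


def backoff_slots(collisions: int, rng: random.Random) -> int:
    """Truncated binary exponential backoff: a random k in [0, 2**min(n, 10) - 1]."""
    return rng.randint(0, 2 ** min(collisions, BACKOFF_LIMIT) - 1)


def send_frame(line_busy: Callable[[], bool],
               collided: Callable[[], bool],
               rng: random.Random) -> Tuple[int, List[int]]:
    """Simulate one station sending one frame on a shared (half-duplex) segment.

    Returns the attempt number that succeeded and the backoff delays, in bit times,
    that the station waited after each collision.
    """
    delays: List[int] = []
    for attempt in range(1, MAX_ATTEMPTS + 1):
        while line_busy():  # carrier sense: defer while another station is transmitting
            pass
        if not collided():  # collision detection: no collision means the frame got through
            return attempt, delays
        if attempt == MAX_ATTEMPTS:
            break
        # After a collision, wait a random number of slot times before trying again.
        delays.append(backoff_slots(attempt, rng) * SLOT_TIME_BITS)
    raise RuntimeError("excessive collisions: frame discarded")


rng = random.Random(42)
outcomes = iter([True, True, False])  # two collisions, then a clean transmission
print(send_frame(lambda: False, lambda: next(outcomes), rng))
```

Randomizing the wait makes it unlikely that the same two stations pick the same delay and collide again, and doubling the range after each collision spreads stations out further as contention grows.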

Later, Ethernet hubs largely gave way to network switches. Because a hub cannot discriminate between points on a network segment, it can't send data directly from point A to point B. Instead, whenever a network device sends a transmission via an input port, the hub copies the data and distributes it to all available output ports.

In contrast, a switch sends each port only the traffic intended for the devices connected to it, rather than copies of every transmission on the network segment, which improves both security and efficiency.
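The difference between the two devices comes down to the forwarding decision, which the following minimal model sketches. The hub repeats every frame to all other ports; the switch records the source MAC address of each frame it receives against the arrival port, then delivers later frames for that address to that single port, flooding only broadcasts and destinations it has not learned yet. The class names `Hub` and `LearningSwitch` are hypothetical, and real switches also age out table entries and handle VLANs, which this sketch omits.

```python
from typing import Dict, List

BROADCAST = "ff:ff:ff:ff:ff:ff"


class Hub:
    """Layer 1 repeater: copies every incoming frame to all other ports."""

    def __init__(self, num_ports: int) -> None:
        self.num_ports = num_ports

    def forward(self, in_port: int, src: str, dst: str) -> List[int]:
        # A hub ignores the addresses entirely.
        return [p for p in range(self.num_ports) if p != in_port]


class LearningSwitch:
    """Layer 2 switch: learns which port each MAC address sits behind."""

    def __init__(self, num_ports: int) -> None:
        self.num_ports = num_ports
        self.mac_table: Dict[str, int] = {}

    def forward(self, in_port: int, src: str, dst: str) -> List[int]:
        self.mac_table[src] = in_port  # learn: frames from src arrive on in_port
        out_port = self.mac_table.get(dst)
        if dst == BROADCAST or out_port is None:
            # Broadcast or not-yet-learned destination: flood, just as a hub would.
            return [p for p in range(self.num_ports) if p != in_port]
        if out_port == in_port:
            return []  # destination is on the arriving segment; filter the frame
        return [out_port]  # known destination: deliver to that one port only


switch = LearningSwitch(4)
print(switch.forward(0, "00:00:00:00:00:0a", "00:00:00:00:00:0b"))  # [1, 2, 3]: ...0b unknown, flood
print(switch.forward(2, "00:00:00:00:00:0b", "00:00:00:00:00:0a"))  # [0]: ...0a learned on port 0
```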

As with other network types, a computer must have a network interface card (NIC) to connect to an Ethernet network.

Types of Ethernet cables

The IEEE 802.3 Working Group approved the first Ethernet standard in 1983. Since then, the technology has continued to evolve and embrace new media, higher transmission speeds and changes in frame content.

Below are some of the changes to Ethernet over time:

- 802.3ac was introduced to accommodate VLAN and priority tagging.

- 802.3af defines Power over Ethernet, which is crucial to most Wi-Fi and IP telephony deployments.

- The separate IEEE 802.11 standards (802.11a, 802.11b, 802.11g, 802.11n, 802.11ac and 802.11ax) define the wireless equivalents of Ethernet for WLANs.

- 802.3u ushered in 100BASE-T -- also known as Fast Ethernet -- with data transmission speeds of up to 100 Mbps. The term BASE-T indicates the use of twisted-pair cabling.

Gigabit Ethernet offers speeds of 1,000 Mbps, which is 1 gigabit or 1 billion bits per second (bps). 10 Gigabit Ethernet (10 GbE) offers up to 10 Gbps, and so on. Over time, the typical speed of each connection tends to increase.
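A quick worked calculation shows what each tenfold step means in practice. The sketch below computes the ideal time to move 1 gigabyte (taken here as 10^9 bytes, since link speeds use decimal prefixes) at each line rate. It assumes the full line rate is available and ignores framing overhead, gaps between frames and congestion, so real transfers take somewhat longer. The names `LINE_RATES_BPS` and `transfer_seconds` are illustrative.

```python
# Nominal line rates in bits per second (decimal prefixes: 1 Mbps = 10**6 bps).
LINE_RATES_BPS = {
    "Ethernet (10 Mbps)": 10 * 10**6,
    "Fast Ethernet (100 Mbps)": 100 * 10**6,
    "Gigabit Ethernet (1 Gbps)": 1_000 * 10**6,
    "10 GbE (10 Gbps)": 10_000 * 10**6,
}


def transfer_seconds(num_bytes: int, rate_bps: int) -> float:
    """Ideal time to send num_bytes at rate_bps, ignoring overhead, gaps and congestion."""
    return num_bytes * 8 / rate_bps  # 8 bits per byte


ONE_GB = 10**9  # 1 gigabyte, decimal
for name, rate in LINE_RATES_BPS.items():
    print(f"{name}: {transfer_seconds(ONE_GB, rate):.1f} s")
# Prints 800.0 s, 80.0 s, 8.0 s and 0.8 s for the four rates, in order.
```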

Network engineers use 100BASE-T to do the following:

- Connect end-user computers, printers and other devices.

- Manage servers and storage.

- Achieve higher speeds for network backbone segments.

Ethernet cables connect network devices to the appropriate routers or modems. Different cables work with different standards and speeds. For example, Category 5 (Cat5) cables support traditional 10 Mbps and 100BASE-T Ethernet. Cat5e cables can handle Gigabit Ethernet, while Cat6 supports 10 GbE over runs of up to 55 meters.
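The cable pairings in the previous paragraph can be expressed as a simple lookup, for example when checking whether existing cabling can carry a planned upgrade. The sketch encodes only the three categories named in the text, by the highest Ethernet speed each is associated with; the table name `MAX_SPEED_MBPS` and the function `cable_supports` are hypothetical, and cable length, installation quality and other categories are not modeled.

```python
# Highest Ethernet speed (in Mbps) associated with each cable category in the text.
MAX_SPEED_MBPS = {
    "Cat5": 100,     # traditional 10 Mbps Ethernet and 100BASE-T (Fast Ethernet)
    "Cat5e": 1_000,  # Gigabit Ethernet
    "Cat6": 10_000,  # 10 GbE (run-length limits are not modeled here)
}


def cable_supports(category: str, speed_mbps: int) -> bool:
    """Return True if the cable category is rated for the requested link speed."""
    if category not in MAX_SPEED_MBPS:
        raise ValueError(f"unknown cable category: {category}")
    return speed_mbps <= MAX_SPEED_MBPS[category]


print(cable_supports("Cat5", 1_000))   # False: Cat5 tops out at 100 Mbps here
print(cable_supports("Cat5e", 1_000))  # True
```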

Ethernet crossover cables, which connect two devices of the same type, also exist. These cables enable two computers to be connected without a switch or router between them.
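Why a crossover cable is needed can be shown with the pin assignments used by 10BASE-T and 100BASE-T: a computer's port transmits on RJ45 pins 1 and 2 and receives on pins 3 and 6. With a straight-through cable, two computers would transmit into each other's transmit pins; a crossover cable swaps the pairs (1 to 3 and 2 to 6) so each side's transmit pins reach the other's receive pins. The sketch below checks that; the names are hypothetical, gigabit links (which use all four pairs) are not modeled, and it ignores the automatic crossover detection (Auto-MDI-X) found on many modern ports.

```python
# Pin pairs used by 10BASE-T and 100BASE-T on a computer's RJ45 port.
TRANSMIT_PINS = (1, 2)
RECEIVE_PINS = (3, 6)

# A crossover cable swaps the transmit and receive pairs; other pins pass straight through.
CROSSOVER_MAP = {1: 3, 2: 6, 3: 1, 6: 2}


def far_end_pin(pin: int, crossover: bool) -> int:
    """Return the pin at the far connector that `pin` is wired to."""
    if not 1 <= pin <= 8:
        raise ValueError(f"RJ45 pins are numbered 1-8, got {pin}")
    if not crossover:
        return pin  # straight-through cable: pin n connects to pin n
    return CROSSOVER_MAP.get(pin, pin)


def link_works(crossover: bool) -> bool:
    """Two like devices talk only if each side's transmit pins reach the peer's receive pins."""
    return all(far_end_pin(p, crossover) in RECEIVE_PINS for p in TRANSMIT_PINS)


print(link_works(crossover=False))  # False: transmit pins land on the peer's transmit pins
print(link_works(crossover=True))   # True: transmit pins land on the peer's receive pins
```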
