Gigabit Ethernet (GbE)

What is Gigabit Ethernet (GbE)?

Gigabit Ethernet (GbE) is a transmission technology for local area networks (LANs) that uses the Ethernet frame format and protocol and provides a data rate of 1 billion bits per second, or 1 gigabit per second (Gbps). Gigabit Ethernet is defined in the Institute of Electrical and Electronics Engineers (IEEE) 802.3 standard and is widely used as the backbone of enterprise networks.

Gigabit Ethernet connects computers and servers in local networks. Its tenfold speed increase over Fast Ethernet, together with its ability to run over the same Category 5 copper cabling, has prompted many enterprises to replace Fast Ethernet with Gigabit Ethernet for wired local networks.

Gigabit Ethernet is carried on optical fiber or copper wire. Existing Ethernet LANs with 10 megabits per second (Mbps) and 100 Mbps network cards can connect to a Gigabit Ethernet backbone.

Faster standards have since followed, starting with 10 GbE, which is 10 times faster than Gigabit Ethernet. Today, data centers and enterprises can choose from a range of higher Ethernet speeds for core switching, including 10 GbE, 25 GbE, 40 GbE and 100 GbE.

How Gigabit Ethernet works

Gigabit Ethernet can operate in half-duplex mode over shared media or in full-duplex mode over links to Ethernet switches. In practice, almost all Gigabit Ethernet networks are switched and run in full duplex.

Gigabit Ethernet uses the same IEEE 802.3 frame format as standard Ethernet and supports speeds of 1 Gbps. In half-duplex mode, it shares the medium using Carrier Sense Multiple Access with Collision Detection (CSMA/CD). A collision occurs when two devices on the same shared segment transmit at the same time and their signals overlap. Under CSMA/CD, each device listens for traffic before transmitting; if it detects a collision while sending, it stops, sends a jam signal, waits a random backoff period and then retransmits the frame. On full-duplex switched links, collisions cannot occur, so CSMA/CD is not used.
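In half-duplex mode, a station that detects a collision does not retry immediately. IEEE 802.3 uses truncated binary exponential backoff: the station waits a random number of slot times drawn from a range that doubles with each successive collision. The minimal simulation below sketches that rule for Gigabit Ethernet, assuming the 1000 Mbps slot time of 4,096 bit times, a backoff limit of 10 and an attempt limit of 16. The names `backoff_delay_s`, `send_frame` and the `collides` callback are hypothetical and chosen for illustration; they are not part of any real driver API.

```python
import random
from typing import Callable, Tuple

# Assumed half-duplex parameters for 1000 Mbps Ethernet.
SLOT_TIME_BITS = 4096          # slot time at 1 Gbps, in bit times
BIT_RATE_BPS = 1_000_000_000   # 1 Gbps
BACKOFF_LIMIT = 10             # the random range stops doubling after 10 collisions
ATTEMPT_LIMIT = 16             # the frame is discarded after 16 collisions


def backoff_delay_s(collisions: int, rng: random.Random) -> float:
    """Return the random wait, in seconds, after the given number of collisions."""
    if collisions < 1:
        raise ValueError("collisions must be at least 1")
    if collisions >= ATTEMPT_LIMIT:
        raise RuntimeError("excessive collisions: frame discarded")
    exponent = min(collisions, BACKOFF_LIMIT)
    slots = rng.randint(0, 2 ** exponent - 1)  # randint includes both endpoints
    return slots * SLOT_TIME_BITS / BIT_RATE_BPS


def send_frame(collides: Callable[[int], bool], rng: random.Random) -> Tuple[int, float]:
    """Retry one frame until an attempt succeeds.

    collides(attempt) is a hypothetical callback that reports whether the
    given attempt (1-based) collided. Returns (successful attempt, total wait).
    """
    waited = 0.0
    attempt = 1
    while collides(attempt):
        # After a collision on attempt n, n collisions have occurred so far.
        waited += backoff_delay_s(attempt, rng)
        attempt += 1
    return attempt, waited
```

For example, after its third collision a station waits between 0 and 7 slot times, or at most about 28.7 µs. Because switched full-duplex links have no collisions, this code path is essentially unused in modern Gigabit Ethernet networks.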

Gigabit Ethernet runs over either copper or fiber optic cable. Twisted-pair copper links are limited to 100 meters (m), so fiber optic cable is needed for longer runs. Over shorter distances, the 1000BASE-T standard delivers gigabit speeds over ordinary Ethernet cabling of Category 5e (Cat5e) or better. Cat5e cable, for example, contains four twisted pairs, or eight wires in total, and 1000BASE-T uses all four pairs.
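The need for all four pairs follows from how 1000BASE-T signals. Each pair carries five-level pulse amplitude modulation (PAM-5) at 125 million symbols per second, with 2 bits of data per symbol on each pair, and all four pairs carry traffic in both directions at once:

$$
4~\text{pairs} \times 125 \times 10^{6}~\frac{\text{symbols}}{\text{s}} \times 2~\frac{\text{bits}}{\text{symbol}} = 1000 \times 10^{6}~\frac{\text{bits}}{\text{s}} = 1~\text{Gbps}
$$

The fifth signal level is not used for extra data; it provides the redundancy for error-correcting coding.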

Types of Gigabit Ethernet

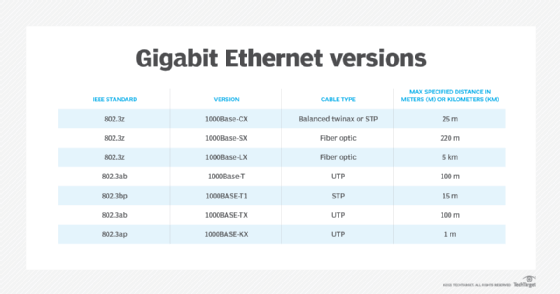

Gigabit Ethernet is defined in several physical layer (PHY) standards, each targeting different cabling and distances, including the following:

- 1000BASE-CX. This standard, which is used for connections up to 25 m, uses either balanced twinaxial cabling or shielded twisted pair (STP) cabling.

- 1000BASE-SX. This standard, which is used for connections of 220 m to 550 m depending on the grade of multimode fiber, uses short-wavelength (850 nanometer) lasers over multimode fiber optic cable.

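- 1000BASE-LX. This standard, which is used for connections up to a maximum distance of 5 kilometers (km), uses long-wavelength (1,310 nanometer) lasers over single-mode fiber optic cable. It can also run up to 550 m over multimode fiber.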

- 1000BASE-T. This standard, which is used for connections up to 100 m, uses all four pairs of Category 5 or better twisted pair copper cable, such as Cat5, Cat5e and Cat6 unshielded twisted pair (UTP). Shielded Cat7 cable also works.

- 1000BASE-T1. This standard, which is used for connections up to 15 m, runs over a single shielded twisted pair (STP) of copper and is designed mainly for automotive and industrial networks.

- 1000BASE-TX. This standard, defined by the Telecommunications Industry Association (TIA) rather than IEEE, is similar to 1000BASE-T and supports connections up to 100 m over UTP copper cable. It never gained wide adoption because of its cost and its requirement for Cat6 or Cat7 cabling.

- 1000BASE-KX. This standard, which is used for connections up to 1 m, runs over copper traces on electrical backplanes inside chassis-based equipment rather than over cables.
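To compare these options by distance, the reach figures from the list can be encoded as a small lookup table. The sketch below is a hypothetical helper: `Phy`, `PHYS` and `candidate_phys` are illustrative names, 1000BASE-SX uses the conservative 220 m multimode figure, and the function ignores cost, port availability and the fact that some PHYs, such as 1000BASE-T1 and 1000BASE-KX, serve niche environments.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Phy:
    name: str
    medium: str
    max_reach_m: float  # nominal maximum link length in meters


# Nominal reach values from the list of Gigabit Ethernet PHYs; real reach
# depends on cable grade, optics and installation quality.
PHYS = (
    Phy("1000BASE-KX", "copper backplane traces", 1),
    Phy("1000BASE-T1", "single twisted copper pair", 15),
    Phy("1000BASE-CX", "twinax or shielded twisted pair", 25),
    Phy("1000BASE-T", "four-pair twisted copper", 100),
    Phy("1000BASE-TX", "twisted copper, Cat6 or better", 100),
    Phy("1000BASE-SX", "multimode fiber", 220),  # conservative multimode figure
    Phy("1000BASE-LX", "single-mode fiber", 5000),
)


def candidate_phys(distance_m: float) -> List[str]:
    """Names of PHYs whose nominal reach covers distance_m, shortest reach first."""
    by_reach = sorted(PHYS, key=lambda p: p.max_reach_m)  # stable sort keeps table order on ties
    return [p.name for p in by_reach if p.max_reach_m >= distance_m]


if __name__ == "__main__":
    for d in (10, 150, 3000):
        print(d, "m:", candidate_phys(d))
```

A 150 m run, for instance, rules out every copper option and leaves only the fiber PHYs.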

Benefits of Gigabit Ethernet

Gigabit Ethernet provides the following benefits:

- Reliability. Fiber optic cabling, used in many Gigabit Ethernet links, is immune to electromagnetic interference, which makes it more reliable than copper wiring in electrically noisy environments.

- Speed. At 1 Gbps, Gigabit Ethernet is 10 times faster than Fast Ethernet, which is more than enough for most everyday business applications.

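- Lower latency. A faster link spends less time putting each frame on the wire: a maximum-size 1,518-byte frame takes about 12 microseconds to transmit at 1 Gbps, compared with about 121 microseconds at 100 Mbps.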

- Video transfer and streaming. A 1 Gbps link has ample headroom for compressed 4K video streams at high frame rates, which typically need only tens of megabits per second each.

- Multiuser support. The bandwidth of a gigabit link can be shared among many devices and applications at the same time.
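The speed benefit can be quantified with simple link-rate arithmetic. The sketch below uses hypothetical helper names (`serialization_delay_s`, `ethernet_goodput_bps`, `transfer_time_s`) and assumes untagged frames with 38 bytes of per-frame overhead on the wire (preamble, start-of-frame delimiter, header, frame check sequence and interframe gap). It ignores IP and TCP headers, so real application throughput will be somewhat lower.

```python
# Per-frame overhead on the wire for an untagged Ethernet frame, in bytes:
# 7 preamble + 1 start-of-frame delimiter + 14 header + 4 FCS + 12 interframe gap.
FRAME_OVERHEAD_BYTES = 7 + 1 + 14 + 4 + 12  # = 38


def serialization_delay_s(frame_bytes: int, link_bps: float) -> float:
    """Time to clock one frame (header, payload, FCS) onto the wire.

    Preamble and interframe gap are excluded from this figure.
    """
    return frame_bytes * 8 / link_bps


def ethernet_goodput_bps(link_bps: float, payload_bytes: int = 1500) -> float:
    """Best-case payload rate for back-to-back frames of the given payload size."""
    on_wire_bytes = payload_bytes + FRAME_OVERHEAD_BYTES
    return link_bps * payload_bytes / on_wire_bytes


def transfer_time_s(size_bytes: int, link_bps: float, payload_bytes: int = 1500) -> float:
    """Best-case time to move size_bytes of payload, ignoring IP/TCP headers."""
    return size_bytes * 8 / ethernet_goodput_bps(link_bps, payload_bytes)


if __name__ == "__main__":
    for name, rate in (("Fast Ethernet", 100e6), ("Gigabit Ethernet", 1e9)):
        print(
            f"{name}: 1,518-byte frame in {serialization_delay_s(1518, rate) * 1e6:.1f} us, "
            f"goodput {ethernet_goodput_bps(rate) / 1e6:.1f} Mbps, "
            f"1 GB file in {transfer_time_s(10**9, rate):.1f} s"
        )
```

With 1,500-byte payloads, framing overhead caps a gigabit link at roughly 975 Mbps of payload, so a 1 GB (10^9 bytes) file takes at least about 8.2 seconds, versus about 82 seconds over Fast Ethernet.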

History

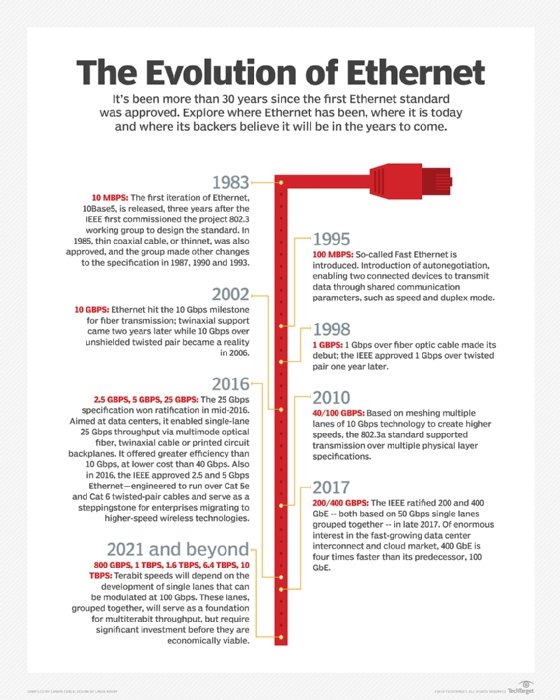

As one of the most widely used LAN technologies, Ethernet was introduced in 1973 and has evolved over the years:

- In 1995, Fast Ethernet was introduced. Designed to carry traffic at 100 Mbps, it remained the fastest Ethernet standard for three years.

- In 1998, three years after the introduction of Fast Ethernet, IEEE introduced Gigabit Ethernet as its successor. It provided a data rate of 1 Gbps and initially ran over fiber optic cable or short shielded copper links.

- In 1999, a new standard, 1000BASE-T, enabled Gigabit Ethernet to run over Cat5, Cat5e or Cat6 UTP cabling.

- In 2002, 10 GbE was introduced.

- In 2004, the 1000BASE-LX10 and 1000BASE-BX10 standards were added.

- In 2010, a standard for 40 GbE and 100 GbE was introduced, aimed at server connections and network aggregation links.

- In 2013, IEEE published results from an Ethernet Study Group for a 400 GbE standard.

- In 2017, IEEE ratified 200 GbE and 400 GbE, which are two times and four times faster, respectively, than 100 GbE.

- The Ethernet Alliance's technology roadmap anticipated that Ethernet speeds of 800 Gbps and 1.6 terabits per second (Tbps) would become IEEE standards between 2023 and 2025.
