network packet

What is a network packet and how does it work?

A network packet is a basic unit of data that's grouped together and transferred over a computer network, typically a packet-switched network, such as the internet. Each packet or chunk of data forms part of a complete message and carries pertinent address information that helps identify the sending computer and intended recipient of the message.

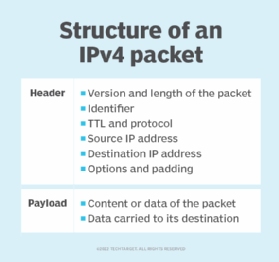

A network packet has three parts: the packet header, the payload and the trailer. The size and structure of a network packet depend on the underlying network structure or protocol used. Conceptually, a network packet is like a postal package. In this scenario, the header is the box or envelope, the payload is the content and the trailer is the signature. The header contains instructions related to the data in the packet.

A network packet is forwarded hop by hop: each router along the way reads the destination address in the header and sends the packet over the best route currently available. Packets that belong to the same message often follow the same route, but they don't have to, which lets the network balance the load across various pieces of equipment. For instance, if there's an issue with a piece of equipment during message transmission, routers redirect the packets along another path to ensure the entire message gets to its destination.
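The rerouting behavior described above can be sketched with a small path search. This is only an illustration under stated assumptions: the topology `LINKS`, the router names and the `shortest_path` helper are hypothetical, and real routers use routing protocols rather than a single global search.

```python
from collections import deque

def shortest_path(links, src, dst, down=frozenset()):
    """Breadth-first search for the fewest-hop path, skipping failed nodes."""
    queue = deque([[src]])
    seen = {src}
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node == dst:
            return path
        for nxt in links.get(node, ()):
            if nxt not in seen and nxt not in down:
                seen.add(nxt)
                queue.append(path + [nxt])
    return None  # no route is available

# Hypothetical topology: host A can reach host B through R1, or through R2 and R3.
LINKS = {
    "A": ["R1", "R2"],
    "R1": ["A", "B"],
    "R2": ["A", "R3"],
    "R3": ["R2", "B"],
    "B": ["R1", "R3"],
}

normal = shortest_path(LINKS, "A", "B")                  # ['A', 'R1', 'B']
rerouted = shortest_path(LINKS, "A", "B", down={"R1"})   # ['A', 'R2', 'R3', 'B']
print(normal, rerouted)
```

When `R1` is marked as down, the search settles on the longer path through `R2` and `R3`, which mirrors how packets still reach their destination after an equipment failure.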

Generally, most networks today operate on the TCP/IP stack, which makes it possible for devices connected to the internet to communicate with one another across different networks.

What are the parts of a network packet?

Network packets are similar in function to a postal package. A network packet or unit of data goes through the process of encapsulation, which adds information to it as it travels toward its destination and marks where it begins and ends.

A network packet is made up of the following three parts:

- Packet header. The header is the beginning or front part of a packet. Any processing or receiving device, such as a router or a switch, sees the header first. The following 13 fields are included in an IPv4 protocol header:

  - Version. This field indicates the format of the internet header.

  - Internet header length (IHL). IHL is the length of the internet header in 32-bit words, so it also points to the beginning of the data. The minimum value is 5, which corresponds to a 20-byte header.

  - Type of service. This indicates the abstract parameters of the quality of service desired.

  - Total length. This is the length of the datagram measured in octets that includes the internet header and data. This field allows the length of a datagram to be up to 65,535 octets.

  - Identification. The sender assigns an identifying value to aid in assembling the fragments of a datagram.

  - Flags. These three bits are control flags: one is reserved, one tells routers not to fragment the datagram and one signals that more fragments follow.

  - Fragment offset. This field indicates where in the datagram this fragment belongs. The fragment offset is measured in units of eight octets, or 64 bits. The first fragment has offset zero.

  - Time to live (TTL). The TTL field indicates the maximum time the datagram is allowed to remain in the internet system. In practice, every router that forwards the datagram decreases the value by at least one, so TTL works as a hop limit. If this field contains the value of zero, then the datagram must be destroyed.

  - Protocol. This field indicates the next-level protocol used in the data portion of the internet datagram.

  - Header checksum. This checksum detects corruption in the header of an IPv4 packet. It doesn't cover the payload.

  - Source address. This is the 32-bit source IP address.

  - Destination address. This is the 32-bit destination IP address.

  - Options. This field is optional, and its length can be variable. A source route option is one example, where the sender requests a certain routing path. If the options don't end on a 32-bit boundary, the header is padded with zeros so that its length is a whole number of 4-byte blocks.

- Payload. This is the actual data the packet carries to its destination. In IPv4, its size is the total length minus the header length. Only the header, not the payload, is padded to a 32-bit boundary.

- Trailer. Sometimes, certain network protocols also attach an end part or trailer to the packet. An IP packet doesn't contain trailers, but Ethernet frames do.
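As a rough illustration of how the header fields listed above sit in the first 20 bytes of an IPv4 packet, the following sketch unpacks them with Python's standard `struct` module. The function name `parse_ipv4_header`, the dictionary keys and the `SAMPLE` bytes are chosen for this example only.

```python
import socket
import struct

def parse_ipv4_header(raw: bytes) -> dict:
    """Unpack the fixed 20-byte part of an IPv4 header plus any options."""
    if len(raw) < 20:
        raise ValueError("an IPv4 header is at least 20 bytes long")
    (ver_ihl, tos, total_length, ident, flags_frag,
     ttl, protocol, checksum, src, dst) = struct.unpack("!BBHHHBBH4s4s", raw[:20])
    ihl = ver_ihl & 0x0F           # header length in 32-bit words
    header_bytes = ihl * 4
    return {
        "version": ver_ihl >> 4,
        "ihl": ihl,
        "header_bytes": header_bytes,
        "type_of_service": tos,
        "total_length": total_length,
        "identification": ident,
        "flags": flags_frag >> 13,                          # top 3 bits
        "fragment_offset_bytes": (flags_frag & 0x1FFF) * 8,  # units of 8 octets
        "ttl": ttl,
        "protocol": protocol,
        "header_checksum": checksum,
        "source": socket.inet_ntoa(src),
        "destination": socket.inet_ntoa(dst),
        "options": raw[20:header_bytes],
    }

# Illustrative header bytes: a UDP datagram from 192.168.0.1 to 192.168.0.199.
SAMPLE = bytes.fromhex("4500 0073 0000 4000 4011 b861 c0a8 0001 c0a8 00c7")

fields = parse_ipv4_header(SAMPLE)
print(fields["version"], fields["header_bytes"], fields["ttl"], fields["source"])
```

The payload length follows from two of the parsed values: `fields["total_length"] - fields["header_bytes"]`.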

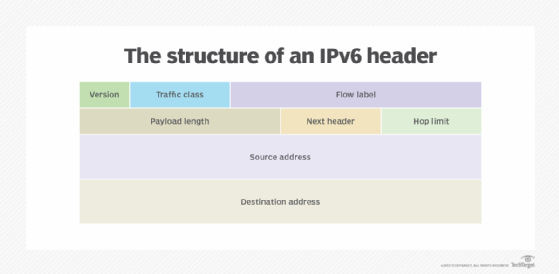
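The header checksum mentioned in the field list can be worked through in a few lines. The sketch below assumes the standard IPv4 method, the ones' complement of the ones' complement sum of the header's 16-bit words. The helper name `ipv4_checksum` and the sample bytes are illustrative.

```python
def ipv4_checksum(header: bytes) -> int:
    """Ones' complement of the ones' complement sum of all 16-bit words."""
    if len(header) % 2:
        raise ValueError("header length must be a multiple of 2 bytes")
    total = 0
    for i in range(0, len(header), 2):
        total += (header[i] << 8) | header[i + 1]
    while total >> 16:                       # fold carries back into 16 bits
        total = (total & 0xFFFF) + (total >> 16)
    return ~total & 0xFFFF

# Illustrative 20-byte header with the checksum field (bytes 10-11) set to zero.
UNSIGNED = bytes.fromhex("4500 0073 0000 4000 4011 0000 c0a8 0001 c0a8 00c7")
checksum = ipv4_checksum(UNSIGNED)           # 0xB861 for these bytes

# A receiver sums the header with the checksum in place; 0 means no error found.
SIGNED = UNSIGNED[:10] + checksum.to_bytes(2, "big") + UNSIGNED[12:]
print(hex(checksum), ipv4_checksum(SIGNED) == 0)
```

Because routers change the TTL at every hop, the checksum has to be recomputed each time the header is modified.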

IPv6 is the successor to IPv4, which was developed in the early 1980s. Despite the introduction and growing adoption of IPv6, IPv4 still routes most of today's internet traffic.

IPv6 uses a different IP header for data packets, as an IPv6 address is 128 bits long, four times larger than a 32-bit IPv4 address. The IPv6 header is a more streamlined version of the IPv4 header and provides better support for real-time traffic by eliminating fields that are rarely used or unnecessary.
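The difference in address size can be checked with Python's standard `ipaddress` module. The two addresses below come from ranges reserved for documentation and are used here only as examples.

```python
import ipaddress

v4 = ipaddress.ip_address("192.0.2.1")      # documentation-range IPv4 address
v6 = ipaddress.ip_address("2001:db8::1")    # documentation-range IPv6 address

# max_prefixlen is the address width in bits; packed is the raw byte form.
print(v4.version, v4.max_prefixlen, len(v4.packed))
print(v6.version, v6.max_prefixlen, len(v6.packed))
```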

Why use packets?

Packets are used for efficient and reliable transmission of data. Instead of transferring a huge file as a single data block, sending it in smaller packets improves transmission efficiency, since an error affects only one small packet rather than the whole file. Packets also enable multiple computers to share the same connection. For example, while one person is downloading a file from a server, another user can simultaneously send packets to the same server, because the packets from both users are interleaved on the shared link.

While it's possible to transfer data without using packets, it would be highly impractical to send the data without first slicing it into smaller chunks.
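A minimal sketch of the slicing idea, assuming a made-up packet format in which each chunk carries a sequence number. The helper names `packetize` and `reassemble` and the dictionary fields are hypothetical, not part of any real protocol.

```python
import random

def packetize(message: bytes, payload_size: int) -> list:
    """Slice a message into numbered packets of at most payload_size bytes."""
    chunks = [message[i:i + payload_size]
              for i in range(0, len(message), payload_size)]
    return [{"seq": n, "total": len(chunks), "payload": chunk}
            for n, chunk in enumerate(chunks)]

def reassemble(packets: list) -> bytes:
    """Put packets back in sequence order and join their payloads."""
    ordered = sorted(packets, key=lambda p: p["seq"])
    return b"".join(p["payload"] for p in ordered)

message = b"Packets let many senders share one link."
packets = packetize(message, payload_size=8)

random.Random(0).shuffle(packets)   # simulate out-of-order arrival
restored = reassemble(packets)
print(restored == message)
```

The sequence number is what allows the receiver to rebuild the message even when the packets arrive in a different order than they were sent.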

The following are some of the benefits of using packets:

- Different paths can be used to route packets to their destination. This process is known as packet switching.

- If an error occurs, the packets can be stored and retransmitted later.

- Packets use the best route available for delivery. This enables them to be routed around congested parts of the network instead of being slowed down in a specific spot.

- To ensure secure delivery, packets can be encrypted.

Packet switching vs. circuit switching

In telecommunications, circuit switching and packet switching are the two common methods of connecting communicating devices. However, they differ in their methodology. Packet switching groups data into packets for transmission over a digital network. It's an efficient way to handle transmissions on a connectionless network, such as the internet.

On the other hand, circuit-switched transmission is used for voice networks. In circuit switching, lines in the network are shared among many users as with packet switching. However, each connection requires the dedication of a particular path for the duration of the connection.

The following highlights the major pros and cons of both technologies.

Packet switching

- It is a connectionless service and doesn't require a dedicated path between the sender and the receiver.

- Each packet carries pertinent information, such as source, destination and protocol identifiers, which helps routers select the best available route to the packet's destination.

- The grouping of data into packets in a packet-switched network enables interoperable networking across different networks and devices until the packets reach the destination, where the receiving host reassembles them into their original form. For example, a host in a packet-switched network, such as Ethernet, can send data that leaves its local area network (LAN) without having any information about the destination's LAN or any of the devices or networks in between.

- While packet-switched networks can't guarantee reliable delivery, they do minimize the risk of data loss, as the receiving device can request the missing packet upon detection and the originating device can then resend it.

- No bandwidth reservation is required in advance, and no call setup is required.

- Protocols used in packet switching are complex. If security protocols aren't used during packet transmission, the connection is insecure.

- Since it isn't a dedicated connection, packet switching is less suited to applications that require little delay and higher service quality, as the delay can vary from packet to packet.

- Although individual packets can be lost, packet switching can still deliver data reliably when a protocol such as TCP runs on top of it, as data packets can be resent if they don't reach their destination.
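The resend behavior in the list above can be sketched as follows. Everything here is a simplified assumption: the `send` function stands in for a lossy link, `missing` for the receiver's gap detection, and the packet format is invented for the example. Real protocols such as TCP use acknowledgments and timers instead.

```python
def send(packets, drop):
    """Hypothetical lossy link: packets whose seq is in `drop` never arrive."""
    return [p for p in packets if p["seq"] not in drop]

def missing(received, total):
    """Sequence numbers the receiver has not seen yet."""
    have = {p["seq"] for p in received}
    return [seq for seq in range(total) if seq not in have]

words = ["net", "work", "pack", "et"]
packets = [{"seq": n, "payload": w} for n, w in enumerate(words)]

received = send(packets, drop={1, 3})            # first attempt loses two packets
wanted = missing(received, total=len(packets))   # receiver asks for [1, 3]
received += send([packets[s] for s in wanted], drop=set())  # sender resends them

ordered = sorted(received, key=lambda p: p["seq"])
message = "".join(p["payload"] for p in ordered)
print(wanted, message)
```

Only the two lost packets are sent again, which is the practical advantage over resending the whole message.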

Circuit switching

- It reserves the entire bandwidth in advance, as a connection setup is required for data transfers. The reserved bandwidth improves the quality of the connection and network performance due to the reduced congestion.

- It requires a dedicated path before the data can travel between the source and the destination, which makes it impossible for others to transmit data over that path even when it's idle. For example, even if there's no transfer of data, the link is still maintained until it's terminated by users.

- Circuit switching is suitable for long and continuous communication due to its dedicated nature.

- A lot of bandwidth can be wasted, as other senders can't use a reserved path even when it's idle and the rest of the network is congested.

- Circuit switching is fully transparent; the sender and receiver can use any bit rate format or framing method.

- Circuit switching is less reliable than packet switching, as the network itself has no means to resend data that is lost and a failure anywhere on the dedicated path breaks the connection.
