Microsoft Outlook

What is Microsoft Outlook?

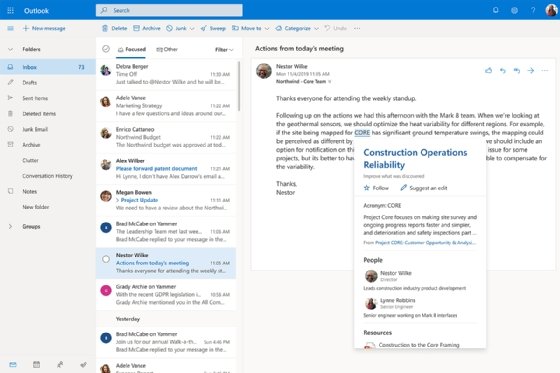

Microsoft Outlook is the preferred email client for sending and receiving messages through Microsoft Exchange Server. Outlook also provides access to contact, calendar and task management features.

Microsoft Outlook may be used as a standalone application, but it is also part of the Microsoft Office suite and Office 365, which also include Microsoft Excel and PowerPoint. Individuals can use Outlook as personal email software, and business customers can use it as multiuser software. Users can integrate it with Microsoft SharePoint to share documents and project notes, collaborate with colleagues, send reminders and more.

There is a free, browser-based version of Outlook with limited features. Users who don't need the full-fledged app can opt for that version rather than a Microsoft 365 subscription.

Microsoft Outlook features

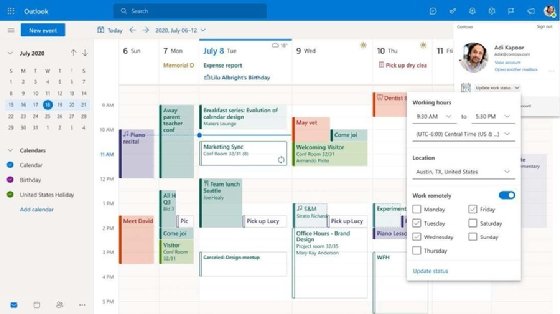

Basic features of Outlook include the email service, email search, flagging and color coding, along with preview pane options. The calendar function enables users to schedule, view and communicate about appointments and meetings. Outlook also provides 99 gigabytes of archive storage and the ability to set automatic replies.

Additional Outlook features include the following:

- Calendar sharing. Users can share calendars to see the availability of colleagues when scheduling meetings.

- @mention. If a user types @ followed by another user's name, Outlook adds that user to the message's recipient list, highlights the mention and notifies the user.

- Email scheduling. Users can write emails ahead of time and choose when to send them.

- Quick Parts. This function enables users to save the text of one email and insert it into future messages. It is useful for users who have to send similar emails to many recipients.

- New item alerts. Incoming messages overlay on the user's display, notifying them of new emails.

- Ignore messages. All messages in a conversation can be set to bypass a user's inbox and go to the deleted items folder.

- File attachment reminder. If a user mentions an attachment in an email but forgets to attach it, Outlook will ask them if they meant to include an attachment before sending the message.

- Clean Up Conversation option. Users can click a button to delete redundant messages in a conversation, meaning earlier messages whose full text is already quoted in a later reply.

- Automatic calendar updates. Outlook will automatically add flight, hotel and car rental reservations to the calendar.

- Keyboard shortcuts. Some key combos available include the following:

  - switch to Mail (CTRL+1)

  - switch to Calendar (CTRL+2)

  - switch to Contacts (CTRL+3)

  - create new appointments (CTRL+SHIFT+A)

  - send a message (ALT+S)

  - reply to a message (CTRL+R)
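The file attachment reminder in the feature list above can be pictured as a simple check that runs before a message is sent. The following is only a minimal sketch of that idea, not Outlook's actual logic: the trigger words, the `ATTACHMENT_HINTS` pattern and the `needs_attachment_reminder` function are all hypothetical names chosen for illustration.

```python
import re

# Hypothetical trigger words; the words Outlook really looks for are not
# listed in this article.
ATTACHMENT_HINTS = re.compile(
    r"\b(attach(ed|ment|ments|ing)?|enclosed)\b", re.IGNORECASE
)


def needs_attachment_reminder(body: str, attachments: list) -> bool:
    """Return True when the body mentions an attachment but none is present."""
    mentions_attachment = ATTACHMENT_HINTS.search(body) is not None
    return mentions_attachment and len(attachments) == 0


if __name__ == "__main__":
    # A message that mentions an attachment but has none would trigger a prompt.
    print(needs_attachment_reminder("See the attached report.", []))
    # The same message with a file attached would be sent without a prompt.
    print(needs_attachment_reminder("See the attached report.", ["report.pdf"]))
```

A keyword check like this errs on the side of asking: the user can always dismiss the prompt, whereas a forgotten attachment means sending a second email.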

Version history

Outlook was released to the public in 1997. Since then, Microsoft has updated the email client with new versions that add more features. Notable versions include the following:

- Outlook 2002 introduced email address autocomplete, group schedules, colored calendar item categories, MSN Messenger integration and consolidated reminders.

- Outlook 2003 rolled out Cached Exchange mode, colored flags, email filtering for spam, desktop alerts and search folders.

- Outlook 2007 debuted attachment previews, improved email spam filtering and anti-phishing features, along with calendar-sharing improvements.

- Outlook 2010 added notifications for when an email is about to be sent without a subject, support for multiple Microsoft Exchange accounts in one Outlook profile and the ability to schedule meetings with a reply to a sender's email. Spell check was also added in more areas of the user interface.

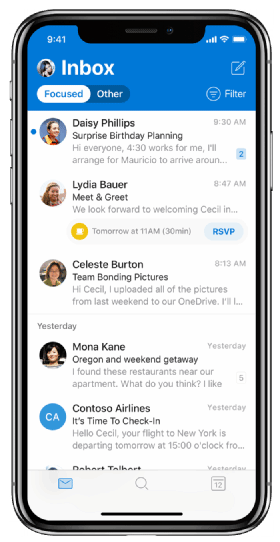

- Outlook 2019 introduced focused inboxes, automatic downloading of cloud attachments and better email sorting.

Microsoft is working on replacing its Windows 10 Mail and Calendar apps and its Win32 Outlook client with a single client for Windows and Mac. Future versions of Microsoft Outlook may also integrate with Microsoft Teams: Microsoft has revealed it is working on a feature that enables users to run apps built for Teams in Outlook.
