throughput

What is throughput?

Throughput is a measure of how many units of information a system can process in a given amount of time. The term applies broadly, from individual components of computer and network systems to entire organizations.

Related measures of system productivity include the speed with which a specific workload can be completed and response time, which is the amount of time between a single interactive user request and receipt of the response.

Types of throughput

Historically, throughput has been a measure of the comparative effectiveness of large commercial computers that run many programs concurrently. Throughput metrics have adapted with the evolution of computing, using various benchmarks to measure throughput in different use cases.

Batches per day and teraflops

An early throughput measure was the number of batch jobs completed in a day. More recent measures either assume a more complicated mixture of work or focus on a particular aspect of computer operation. Units like trillions of floating-point operations per second (teraflops) provide a metric to compare the cost of raw computing over time or by manufacturer.

Network throughput and bits per second

In data transmission, network throughput is the amount of data moved successfully from one place to another in a given time period. Network throughput is typically measured in bits per second (bps), as in megabits per second (Mbps) or gigabits per second (Gbps).
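As a worked example of this definition, throughput is the data delivered successfully divided by the elapsed time, with bytes converted to bits. The Python sketch below assumes decimal prefixes (1 Mbps = 1,000,000 bps), as network rates usually use; the function names and the 250 MB transfer are illustrative only.

```python
def throughput_bps(bytes_moved: int, seconds: float) -> float:
    """Bits per second for `bytes_moved` bytes delivered successfully in `seconds`."""
    if seconds <= 0:
        raise ValueError("elapsed time must be positive")
    return bytes_moved * 8 / seconds  # 8 bits per byte


def to_mbps(bps: float) -> float:
    # Network rates use decimal prefixes: 1 Mbps = 1,000,000 bps.
    return bps / 1_000_000


# Hypothetical example: 250 MB (decimal) delivered in 20 seconds.
rate = throughput_bps(250_000_000, 20)
print(f"{to_mbps(rate):.1f} Mbps")  # 100.0 Mbps
```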

Storage throughput and bytes per second

In storage systems, throughput refers to either of the following:

- the amount of data that can be received and written to the storage medium; or

- the amount of data read from media and returned to the requesting system.

Storage throughput is typically measured in bytes per second (Bps). It can also refer to the number of discrete input or output (I/O) operations completed per second, or IOPS.
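Bytes per second and IOPS are linked by the size of each I/O: to a first approximation, bytes per second equals IOPS multiplied by I/O size. The sketch below uses hypothetical workload numbers to show why a high IOPS figure does not by itself imply high throughput.

```python
def storage_throughput_bytes_per_s(iops: float, io_size_bytes: int) -> float:
    """Approximate throughput implied by an IOPS figure at a fixed I/O size."""
    return iops * io_size_bytes


# Hypothetical workload: 10,000 IOPS of 4 KiB (4,096-byte) random reads.
small_io = storage_throughput_bytes_per_s(10_000, 4096)
# Hypothetical workload: 1,000 IOPS of 1 MiB (1,048,576-byte) sequential reads.
large_io = storage_throughput_bytes_per_s(1_000, 1_048_576)

# Ten times fewer operations per second, yet far more bytes per second.
print(f"{small_io / 1e6:.2f} MBps")  # 40.96 MBps
print(f"{large_io / 1e6:.2f} MBps")  # 1048.58 MBps
```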

Transactions per second

Throughput applies at higher levels of IT infrastructure as well. Databases and other middleware are often rated in transactions per second (TPS), and web servers in pageviews per minute.
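One way to estimate a TPS-style rate is to count completed operations over a measured wall-clock interval. This is a minimal sketch assuming a single caller that issues one operation at a time; `measure_ops_per_second` and `fake_transaction` are hypothetical names, and real benchmarks typically drive many concurrent clients.

```python
import time
from typing import Callable


def measure_ops_per_second(operation: Callable[[], object], duration_s: float = 1.0) -> float:
    """Call `operation` back to back for about `duration_s` seconds; return completions per second."""
    if duration_s <= 0:
        raise ValueError("duration must be positive")
    completed = 0
    start = time.perf_counter()
    deadline = start + duration_s
    now = start
    while now < deadline:
        operation()  # one "transaction"; it must finish before the next one starts
        completed += 1
        now = time.perf_counter()
    return completed / (now - start)


# Hypothetical stand-in for a database transaction.
def fake_transaction() -> int:
    return sum(range(10_000))


print(f"{measure_ops_per_second(fake_transaction, 0.2):.0f} ops/s")
```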

Throughput also applies to the people and organizations using these systems. Independent of the TPS rating of its help desk software, for example, a help desk has its own throughput rate that includes the time staff spend on developing responses to requests.

Throughput, bandwidth and latency

Three related terms (throughput, bandwidth and latency) are sometimes mistakenly interchanged. Network bandwidth is the maximum amount of data the network can move in a given time. Throughput is the amount of data it actually moves successfully in that time. Latency is the delay between sending data and its arrival, measured as a time rather than as a rate.

Network throughput and latency together reflect a network's performance.
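A rough way to see how the two combine: the time until the last byte of a transfer arrives is approximately the one-way latency plus the payload size divided by throughput. This sketch ignores connection setup, TCP slow start and retransmissions, and its link figures are hypothetical; it shows that latency dominates small transfers while throughput dominates large ones.

```python
def transfer_time_s(payload_bytes: int, throughput_bps: float, one_way_latency_s: float) -> float:
    """Rough time until the last byte arrives: latency plus time to stream the payload."""
    return one_way_latency_s + payload_bytes * 8 / throughput_bps


# Hypothetical link: 100 Mbps achieved throughput, 50 ms one-way latency.
small = transfer_time_s(1_000, 100e6, 0.050)          # latency dominates
large = transfer_time_s(1_000_000_000, 100e6, 0.050)  # throughput dominates
print(f"1 KB: {small * 1000:.2f} ms, 1 GB: {large:.2f} s")
```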

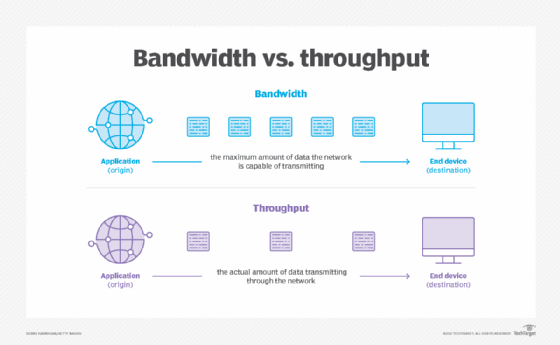

Throughput vs. network bandwidth

Bandwidth is the capacity of a wired or wireless network communications link to transmit data from one point to another over a computer network or internet connection: the maximum amount it can carry in a given amount of time, usually one second. Synonymous with capacity, bandwidth describes the maximum data transfer rate, not the rate actually achieved. Contrary to a common misconception, bandwidth is not a measure of network speed.

Bandwidth is expressed in bits per second. Because modern network links have far greater capacity, it is typically quoted in millions of bits per second (Mbps) or billions of bits per second (Gbps).

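Throughput can never exceed bandwidth, and in practice it is lower, because bandwidth represents the maximum capability of a network rather than the actual transfer rate. Protocol overhead, congestion and packet loss all reduce the amount of data that actually gets through.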
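Protocol overhead alone keeps throughput below bandwidth. The sketch below estimates the best-case TCP payload rate over Ethernet, assuming a 1,500-byte MTU, no VLAN tag, and IPv4 and TCP headers without options. Under those assumptions each frame carries 1,460 bytes of application data but occupies 1,538 bytes of wire time once the preamble, Ethernet header, frame check sequence and inter-frame gap are counted.

```python
# Per-frame costs on the wire for standard Ethernet, in bytes.
PREAMBLE_SFD = 8
ETH_HEADER = 14
FCS = 4
INTERFRAME_GAP = 12
MTU = 1500        # assumption: standard MTU, no VLAN tag
IPV4_HEADER = 20  # assumption: no IP options
TCP_HEADER = 20   # assumption: no TCP options (timestamps would add 12 more)


def max_tcp_goodput_bps(link_bps: float) -> float:
    """Upper bound on TCP payload rate, counting only header and framing overhead."""
    wire_bytes_per_frame = PREAMBLE_SFD + ETH_HEADER + MTU + FCS + INTERFRAME_GAP  # 1538
    payload_per_frame = MTU - IPV4_HEADER - TCP_HEADER  # 1460
    return link_bps * payload_per_frame / wire_bytes_per_frame


print(f"{max_tcp_goodput_bps(1e9) / 1e6:.2f} Mbps")  # 949.28 Mbps on a 1 Gbps link
```

Real transfers land below this bound, since it leaves out acknowledgments, retransmissions and congestion.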

Throughput vs. network latency

Network latency is an expression of how much time it takes for a data packet to get from one designated point to another. In some environments, latency is measured by sending a packet that is returned to the sender -- the round-trip time is considered the latency. Ideally, latency is as close to zero as possible.
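Latency also caps throughput when a sender must wait for acknowledgments. Assuming a TCP-style sender that can have at most one window of unacknowledged data in flight, throughput cannot exceed the window size divided by the round-trip time. Conversely, keeping a link full requires roughly bandwidth times RTT bytes in flight (the bandwidth-delay product). The 65,535-byte window below is the largest TCP window without window scaling; the path figures are hypothetical.

```python
def window_limited_throughput_bps(window_bytes: int, rtt_s: float) -> float:
    """Max rate when at most `window_bytes` can be unacknowledged per round trip."""
    return window_bytes * 8 / rtt_s


def bandwidth_delay_product_bytes(link_bps: float, rtt_s: float) -> float:
    """Bytes that must be in flight to keep a `link_bps` link busy for one RTT."""
    return link_bps * rtt_s / 8


# Hypothetical path with a 100 ms round-trip time.
print(f"{window_limited_throughput_bps(65_535, 0.100) / 1e6:.2f} Mbps")  # 5.24 Mbps
print(f"{bandwidth_delay_product_bytes(1e9, 0.100):,.0f} bytes")  # 12,500,000 bytes
```

On this path a 64 KiB window uses only about half a percent of a 1 Gbps link, no matter how much bandwidth is available.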

Contributors to degraded network throughput include the following:

- Hardware issues. Routers and other devices may be outdated or faulty.

- Traffic. If network traffic is heavy, it can result in packet loss.

Contributors to network latency include the following:

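- Propagation. This is the time it takes for a signal to travel from one place to another through the medium. Signals travel close to the speed of light (roughly two-thirds of it in optical fiber), so this delay grows with distance.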

- Transmission. The medium itself (whether optical fiber, wireless or something else) introduces some delay, which varies from one medium to another. The size of the packet introduces delay in a round trip because a larger packet takes longer to receive and return than a short one. Also, when a repeater is used to boost signals, this too introduces additional latency.

- Router processing. Each gateway node takes time to examine and possibly change the header in a packet, for example, decrementing the time-to-live (TTL) field, which serves as a hop count.

- Computer and storage delays. Within networks at each end of the journey, a packet might be subject to storage and hard disk access delays at intermediate devices, such as switches and bridges. Backbone statistics, however, probably don't consider this kind of latency.
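The first two contributors can be estimated with simple arithmetic. This sketch assumes signals travel about 200,000 km/s in optical fiber and treats the packet-size component as serialization delay (packet bits divided by link rate). Router processing and storage delays are not modeled, and the distance and link rate are hypothetical.

```python
SPEED_IN_FIBER_KM_S = 200_000  # assumption: roughly two-thirds of c in optical fiber


def propagation_delay_s(distance_km: float, speed_km_s: float = SPEED_IN_FIBER_KM_S) -> float:
    """Time for a signal to cover `distance_km` through the medium."""
    return distance_km / speed_km_s


def transmission_delay_s(packet_bytes: int, link_bps: float) -> float:
    """Time to put all of a packet's bits onto the link (serialization delay)."""
    return packet_bytes * 8 / link_bps


# Hypothetical: a 1,500-byte packet over 1,000 km of fiber on a 100 Mbps link.
prop = propagation_delay_s(1_000)
tx = transmission_delay_s(1_500, 100e6)
print(f"propagation {prop * 1e3:.2f} ms, transmission {tx * 1e3:.2f} ms")
```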

How to measure and monitor network throughput

Various passive and active tools are available to measure and monitor network traffic. These tools include Simple Network Management Protocol (SNMP), Windows Management Instrumentation (WMI), tcpdump, Wireshark and others.

SNMP is an application-layer protocol used to manage and monitor network devices and their functions. SNMP provides a common language for network devices to relay management information within single- and multivendor environments in a local area network (LAN) or wide area network (WAN). The most recent iteration of SNMP, version 3, includes security enhancements that authenticate and encrypt SNMP messages, as well as protect packets during transit.
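SNMP is commonly used to derive throughput by polling an interface's octet counters, such as `ifHCInOctets` from IF-MIB, at a fixed interval and dividing the change by the elapsed time. The sketch below does only that arithmetic; fetching the counter values is left to whatever SNMP tool is in use, and the sample values are hypothetical. The modulo handles a single counter wrap but not a counter reset after a device reboot.

```python
COUNTER64_MODULUS = 2**64  # ifHCInOctets / ifHCOutOctets are 64-bit counters


def octet_counter_rate_bps(first: int, second: int, interval_s: float,
                           modulus: int = COUNTER64_MODULUS) -> float:
    """Bits per second between two polls of an octet counter, allowing one wrap."""
    delta = (second - first) % modulus  # a wrapped counter still yields the right delta
    return delta * 8 / interval_s


def utilization_pct(rate_bps: float, if_speed_bps: float) -> float:
    """Share of the interface's nominal bandwidth in use."""
    return 100 * rate_bps / if_speed_bps


# Hypothetical ifHCInOctets samples taken 10 seconds apart on a 1 Gbps interface.
rate = octet_counter_rate_bps(1_000_000, 13_500_000, 10)
print(rate, utilization_pct(rate, 1e9))  # 10000000.0 1.0
```

For older 32-bit counters such as `ifInOctets`, pass `modulus=2**32`; they wrap quickly on fast links, which is why the 64-bit counters are preferred.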

WMI is a set of specifications from Microsoft for consolidating the management of devices and applications in a network from Windows computing systems. WMI provides users with information about the status of local or remote computer systems. It also supports the following actions:

- configuration of security settings;

- setting and changing system properties;

- setting and changing permissions for authorized users and user groups;

- assigning and changing drive labels;

- scheduling processes to run at specific times;

- backing up the object repository; and

- enabling or disabling error logging.

Tcpdump is an open source command-line tool for monitoring, or sniffing, network traffic. Tcpdump captures and displays packet headers and matches them against a set of criteria. It understands boolean search operators and can use host names, IP addresses, network names and protocols as arguments.

Wireshark is another open source tool that analyzes network traffic. It can look at traffic details, such as transmit time, protocol type, header data, source and destination. Network and security teams often use Wireshark to assess security incidents and troubleshoot network issues.
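Captures saved by tcpdump with its `-w` option are written in the classic pcap format, which Wireshark can also open, and average throughput over a capture can be computed directly from the per-packet record headers. This is a minimal sketch assuming a classic pcap file rather than pcapng; it sums each packet's original length and divides by the time between the first and last packets, so it slightly overstates the rate for very short captures. The function name and file name are hypothetical.

```python
import struct

# Magic numbers of the classic pcap global header, as they appear on disk.
_PCAP_MAGICS = {
    b"\xd4\xc3\xb2\xa1": ("<", 1e6),  # little-endian, microsecond timestamps
    b"\xa1\xb2\xc3\xd4": (">", 1e6),  # big-endian, microsecond timestamps
    b"\x4d\x3c\xb2\xa1": ("<", 1e9),  # little-endian, nanosecond timestamps
    b"\xa1\xb2\x3c\x4d": (">", 1e9),  # big-endian, nanosecond timestamps
}
GLOBAL_HEADER_LEN = 24


def pcap_throughput_bps(data: bytes) -> float:
    """Average bits per second across a classic pcap capture held in memory."""
    try:
        endian, ticks_per_s = _PCAP_MAGICS[data[:4]]
    except KeyError:
        raise ValueError("not a classic pcap file (pcapng is not handled)") from None
    record = struct.Struct(endian + "IIII")  # ts_sec, ts_frac, incl_len, orig_len
    offset = GLOBAL_HEADER_LEN
    first_ts = last_ts = None
    total_bytes = 0
    while offset + record.size <= len(data):
        ts_sec, ts_frac, incl_len, orig_len = record.unpack_from(data, offset)
        ts = ts_sec + ts_frac / ticks_per_s
        if first_ts is None:
            first_ts = ts
        last_ts = ts
        total_bytes += orig_len  # length on the wire, even if the snap length truncated it
        offset += record.size + incl_len  # skip over the captured packet bytes
    if first_ts is None or last_ts <= first_ts:
        raise ValueError("need at least two packets spanning a nonzero interval")
    return total_bytes * 8 / (last_ts - first_ts)


# Usage (hypothetical file name):
# with open("capture.pcap", "rb") as f:
#     print(pcap_throughput_bps(f.read()) / 1e6, "Mbps")
```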